Building an A.I. News Agent

📝 Introduction

The Information Overload Crisis

Picture this: It's 7 AM, and you're already drowning. Your Twitter feed is exploding with crypto market updates, your Telegram channels are buzzing with AI breakthroughs, and your RSS reader shows 847 unread articles. Sound familiar?

In today's hyper-connected world, staying informed is becoming impossible. The average person consumes numerous newspapers worth of information daily, yet retains less than 1% of it. Meanwhile, the most successful investors, entrepreneurs, and technologists seem to have a crystal ball, always staying ahead of trends.

What if I told you that crystal ball is actually a system?

Today, we're going to build something extraordinary: an AI-powered content aggregation system that doesn't just collect information but understands it, analyzes it, and transforms it into actionable insights. This isn't your typical tutorial project. By the end of this guide, you'll have created a production-ready system that:

- Scrapes multiple data sources (Twitter/X, Telegram, RSS feeds) intelligently

- Analyzes content with AI (GPT-4, Claude, Gemini, Ollama)

- Generates structured insights that cut through the noise and filter

- Automates social media posting directly posting insights to Twitter

- Scales to handle millions of data points with intelligent caching

Got any questions? Find us on Discord or reach out on Twitter.

What You'll Build: The CL Digest Bot

We're building the Compute Labs Digest Bot—a sophisticated system that transformed how Compute Labs stays ahead in the rapidly evolving AI and crypto landscape. It's a real system processing thousands of data points daily and generating insights that drive business decisions. Also, if that's not enough for you, continue building on to turn the Bot into an Agent you can control through natural language.

🎯 Key Features We'll Implement

Smart Data Collection:

- Multi-platform scraping with rate limiting

- Intelligent content filtering and quality scoring

- Automated deduplication and source attribution

AI-Powered Analysis:

- Advanced prompt engineering for different content types

- Token optimization strategies to manage costs

- Multi-model integration (OpenAI + Anthropic)

Automated Distribution:

- Bot posts your AI summarized news directly to Twitter

- Slack integration for team collaboration

- Video generation for social media

Production-Ready Architecture:

- Type-safe configuration management

- Comprehensive error handling and retry logic

- Docker containerization and deployment

Bonus

- Turn your digest bot into an A.I Agent

- Build natural language intent recognition

- Deploy a simple Chat UI to interact with your bot

💡 Who This Tutorial Is For

Perfect for:

- Intermediate developers comfortable with JavaScript/TypeScript

- Data enthusiasts interested in web scraping and automation

- AI builders wanting to integrate LLMs into real applications

- Entrepreneurs looking to automate content operations

You should know:

- JavaScript/TypeScript fundamentals

- Basic API concepts (REST, authentication)

- Command line basics

- Git version control

Don't worry if you're new to:

- AI/LLM integration (we'll cover everything)

- Web scraping techniques

- Social media APIs

- Docker and deployment

🚨 Before Getting Started

GitHub Repo: https://github.com/compute-labs-dev/cl-digest-bot-oss

This project is broken up into 12 chapters and designed for you to follow along. Each section has completed code that can be found on the respective branch of the repo chpt_1 for example is at https://github.com/compute-labs-dev/cl-digest-bot-oss/tree/chpt_1

If you are looking to just skip ahead and clone the completed build you can just fast-forward to the final branch here.

Technology is also constantly evolving, so if you discover any breaking changes we encourage you to try and find a fix then open a PR, there is now better time to become an Open Source Contributor!

Also, if you do decide to take on this build, drop us a note on twitter! We love to support A.I. builders and who knows, builiding in public might lead your A.I. bot to the next big seed round!

Chapter 1

The Foundation - Setting Up Your Digital Workshop

"Every expert was once a beginner. Every pro was once an amateur. Every icon was once an unknown." - Robin Sharma

Before we dive into the exciting world of AI and automation, we need to build a solid foundation. Think of this chapter as setting up your digital workshop—we'll install the right tools, configure our workspace, and establish patterns that will serve us throughout the entire project.

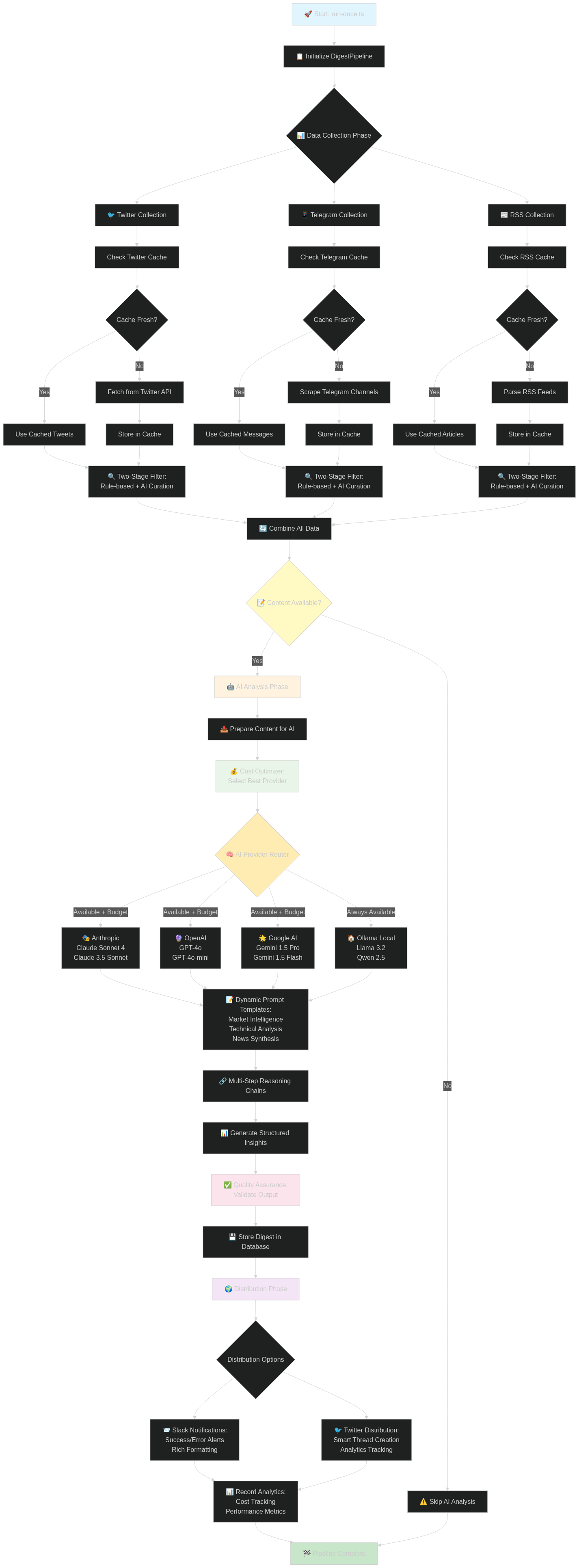

Quick Overview

To quickly visualize the type of pipeline we are attmempting to build, let's chart it out:

This build is not binding! You are encouraged to make it your own! Whether it's adding in a Video Generation step with a Voiceover reading your digest, or posting to Reddit instead of Twitter.

You can even build a front-end to display the digests and their source data. The possibilities are endless, make your build stand out!

🚀 Creating Your Next.js Project

Let's start with the foundation. We're using Next.js because it gives us:

- Server-side rendering for better performance

- API routes for backend functionality

- TypeScript support out of the box

- Excellent developer experience with hot reloading

Open your terminal and run:

# Create the project with all the modern bells and whistles

npx create-next-app@latest cl-digest-bot --typescript --tailwind --eslint --app --src-dir --import-alias "@/*"

# Navigate into your new project

cd cl-digest-bot

# Let's see what we've got

ls -la

What just happened? We created a modern Next.js application with:

- TypeScript for type safety (fewer bugs, better developer experience)

- Tailwind CSS for styling (rapid UI development)

- App Router (Next.js 13+ modern routing)

- Import aliases (

@/instead of../../..)

📦 Essential Dependencies: Your AI Toolkit

Now let's install the packages that will power our system. Each one serves a specific purpose:

# AI and Language Models

npm install @ai-sdk/openai @ai-sdk/anthropic ai dotenv

# Database and Backend

npm install @supabase/supabase-js @supabase/auth-helpers-nextjs

# Social Media APIs

npm install twitter-api-v2 @slack/web-api googleapis

# Web Scraping and Data Processing

npm install jsdom fast-xml-parser got@11.8.6 node-fetch@2

# Utilities and Logging

npm install winston cli-progress date-fns uuid zod

# Development Dependencies

npm install --save-dev @types/jsdom @types/node-fetch @types/uuid ts-node

Why these specific packages?

🤖 AI Integration:

@ai-sdk/openai&@ai-sdk/anthropic: Vercel's AI SDK for seamless model integrationai: Unified interface for different AI providers

🗄️ Data Layer:

@supabase/supabase-js: PostgreSQL database with real-time features- Supabase gives us authentication, real-time subscriptions, and edge functions

📱 Social APIs:

twitter-api-v2: Most robust Twitter API client@slack/web-api: Official Slack SDKgoogleapis: YouTube and other Google services

🕷️ Web Scraping:

jsdom: Parse HTML like a browserfast-xml-parser: Handle RSS feeds efficientlygot: HTTP client with advanced features

🛠️ Developer Experience:

winston: Professional logging with multiple transportscli-progress: Visual feedback for long operationszod: Runtime type validation

🏗️ Project Structure: Organizing for Scale

Let's set up a directory structure that will scale with our project:

# Create our core directories

mkdir -p lib/{ai,x-api,telegram,rss,slack,supabase,logger} types config utils scripts/{db,fetch,digest,test} docs

Your project should now look like this:

cl-digest-bot/

├── app/ # Next.js app directory

├── lib/ # Core business logic

│ ├── ai/ # AI service integrations

│ ├── x-api/ # Twitter/X API client

│ ├── telegram/ # Telegram scraping

│ ├── rss/ # RSS feed processing

│ ├── slack/ # Slack integration

│ ├── supabase/ # Database layer

│ └── logger/ # Logging utilities

├── types/ # TypeScript type definitions

├── config/ # Configuration management

├── utils/ # Shared utility functions

├── scripts/ # CLI tools and automation

│ ├── db/ # Database operations

│ ├── fetch/ # Data collection scripts

│ ├── digest/ # Content processing

│ └── test/ # Testing utilities

└── docs/ # Documentation

Note: this structure may change with the build, but for now this gives you an idea of how things will be organized.

⚙️ TypeScript Configuration: Type Safety First

Let's configure TypeScript for both our main app and our scripts. First, update your main tsconfig.json:

{

"compilerOptions": {

"lib": ["dom", "dom.iterable", "es2022"],

"allowJs": true,

"skipLibCheck": true,

"strict": true,

"noEmit": true,

"esModuleInterop": true,

"module": "esnext",

"moduleResolution": "bundler",

"resolveJsonModule": true,

"isolatedModules": true,

"jsx": "preserve",

"incremental": true,

"plugins": [

{

"name": "next"

}

],

"baseUrl": ".",

"paths": {

"@/*": ["./src/*"],

"@/lib/*": ["./lib/*"],

"@/types/*": ["./types/*"],

"@/config/*": ["./config/*"],

"@/utils/*": ["./utils/*"]

}

},

"include": ["next-env.d.ts", "**/*.ts", "**/*.tsx", ".next/types/**/*.ts"],

"exclude": ["node_modules", "scripts"]

}

Now create a separate TypeScript config for our scripts:

# Create scripts TypeScript config

cat > scripts/tsconfig.json << 'EOF'

{

"extends": "../tsconfig.json",

"compilerOptions": {

"module": "commonjs",

"moduleResolution": "node",

"target": "es2020",

"noEmit": false,

"outDir": "./dist",

"rootDir": "../",

"baseUrl": "../",

"paths": {

"@/*": ["./src/*"],

"@/lib/*": ["./lib/*"],

"@/types/*": ["./types/*"],

"@/config/*": ["./config/*"],

"@/utils/*": ["./utils/*"]

}

},

"include": [

"../lib/**/*",

"../types/**/*",

"../config/**/*",

"../utils/**/*",

"./**/*"

],

"exclude": ["node_modules"]

}

EOF

🔧 Package.json Scripts: Your Command Center

Let's add some useful scripts to our package.json. Add these to the scripts section:

{

"scripts": {

"dev": "next dev",

"build": "next build",

"start": "next start",

"lint": "next lint",

"script": "ts-node -P scripts/tsconfig.json",

"test:db": "npm run script scripts/db/test-connection.ts",

"test:digest": "npm run script scripts/digest/test-digest.ts"

}

}

🌱 Environment Setup: Keeping Secrets Safe

Create your environment file:

touch .env.local

Add this to your .env.local:

# AI Models

OPENAI_API_KEY=your_openai_api_key_here

ANTHROPIC_API_KEY=your_anthropic_api_key_here

# Database

NEXT_PUBLIC_SUPABASE_URL=your_supabase_url

NEXT_PUBLIC_SUPABASE_ANON_KEY=your_supabase_anon_key

SUPABASE_SERVICE_ROLE_KEY=your_supabase_service_key

# Social Media APIs

X_API_KEY=your_twitter_api_key

X_API_SECRET=your_twitter_api_secret

SLACK_BOT_TOKEN=your_slack_bot_token

SLACK_CHANNEL_ID=your_slack_channel_id

# Development

NODE_ENV=development

🧪 Testing Your Setup

Let's create a simple test to verify everything is working:

// scripts/test/test-setup.ts

import { config } from 'dotenv';

// Load environment variables

config({ path: '.env.local' });

console.log('🚀 Testing CL Digest Bot Setup...\n');

// Test environment variables

const requiredEnvVars = [

'OPENAI_API_KEY',

'NEXT_PUBLIC_SUPABASE_URL'

];

let allGood = true;

for (const envVar of requiredEnvVars) {

if (process.env[envVar]) {

console.log(`✅ ${envVar}: Configured`);

} else {

console.log(`❌ ${envVar}: Missing`);

allGood = false;

}

}

// Test TypeScript compilation

try {

const testData: { message: string; success: boolean } = {

message: "TypeScript is working!",

success: true

};

console.log(`✅ TypeScript: ${testData.message}`);

} catch (error) {

console.log(`❌ TypeScript: Error`);

allGood = false;

}

console.log('\n' + (allGood ? '🎉 Setup complete! Ready to build.' : '🔧 Please fix the issues above.'));

Run the test:

npm run script scripts/test/test-setup.ts

🎯 What We've Accomplished

Congratulations! You've just built the foundation for a sophisticated AI system. Here's what we've set up:

✅ Modern Next.js application with TypeScript and Tailwind

✅ Comprehensive dependency management for AI, databases, and APIs

✅ Scalable project structure organized by domain

✅ Dual TypeScript configuration for app and scripts

✅ Environment management with security best practices

✅ Testing infrastructure to verify setup

🔍 Pro Tips & Common Pitfalls

💡 Pro Tip: Always use specific versions for AI SDKs. The AI space moves fast, and breaking changes are common.

⚠️ Common Pitfall: Don't commit your .env.local file! Add it to .gitignore immediately.

🔧 Debugging: If ts-node gives you import errors, make sure your scripts/tsconfig.json includes the right paths.

📋 Complete Code Summary - Chapter 1

Here's your complete project structure after Chapter 1:

# Project creation and setup

npx create-next-app@latest cl-digest-bot --typescript --tailwind --eslint --app --src-dir --import-alias "@/*"

cd cl-digest-bot

# Install all dependencies

npm install @ai-sdk/openai @ai-sdk/anthropic ai @supabase/supabase-js @supabase/auth-helpers-nextjs twitter-api-v2 @slack/web-api googleapis jsdom fast-xml-parser got@11.8.6 node-fetch@2 winston cli-progress date-fns uuid zod

npm install --save-dev @types/jsdom @types/node-fetch @types/uuid ts-node

# Create directory structure

mkdir -p {lib/{ai,x-api,telegram,rss,slack,supabase,logger},types,config,utils,scripts/{db,fetch,digest,test}}

# Your package.json scripts section should include:

{

"scripts": {

"dev": "next dev",

"build": "next build",

"start": "next start",

"lint": "next lint",

"script": "ts-node -P scripts/tsconfig.json",

"test:db": "npm run script scripts/db/test-connection.ts",

"test:digest": "npm run script scripts/digest/test-digest.ts"

}

}

🍾 Chapter 1 Complete

You can compare your code to the completed Chapter 1 code here.

Next up: In Chapter 2, we'll set up our Supabase database, create our core data models, and build the logging system that will track our system's every move. Get ready to dive into the data layer!

Ready to continue? In the next chapter, we'll create the database schema and logging infrastructure that will power our entire system. The real magic is about to begin! 🚀

Chapter 2

Building Your Data Foundation - Database & Core Structure

"Data is the new oil, but like oil, it's only valuable when refined." - Clive Humby

Now that we have our development environment set up, it's time to build the backbone of our system: the database layer and core data structures. Think of this chapter as constructing the foundation and plumbing for a skyscraper—not the most glamorous work, but absolutely critical for everything that follows.

In this chapter, we'll create a robust data layer that can handle thousands of tweets, Telegram messages, and RSS articles while maintaining lightning-fast query performance. We'll also build a professional logging system that will be your best friend when debugging issues at 2 AM.

🗄️ Setting Up Supabase: Your PostgreSQL Powerhouse

Why Supabase Over Other Solutions?

Before we dive in, let's talk about why we chose Supabase:

- PostgreSQL under the hood: Real SQL, not a NoSQL compromise

- Real-time subscriptions: Watch data change live

- Built-in authentication: User management without the headache

- Edge functions: Serverless functions that scale

- Generous free tier: Perfect for development and small projects

Creating Your Supabase Project

-

Sign up at supabase.com

-

Create a new project:

- Name:

cl-digest-bot - Database password: Generate a strong one (save it!)

- Region: Choose the closest to your users

- Name:

-

Grab your credentials from the project settings:

- Project URL

- Anon public key

- Service role key (keep this secret!)

-

Update your

.env.local:

# Add these to your existing .env.local

NEXT_PUBLIC_SUPABASE_URL=https://your-project.supabase.co

NEXT_PUBLIC_SUPABASE_ANON_KEY=your_anon_key_here

SUPABASE_SERVICE_ROLE_KEY=your_service_role_key_here

🏗️ Database Schema: Designing for Scale

Let's create our database tables. We need to store:

- Tweets with engagement metrics

- Telegram messages from various channels

- RSS articles with metadata

- Generated digests and their configurations

- Source accounts for each platform

Create this file to define our schema:

-- scripts/db/schema.sql

-- Enable UUID extension

CREATE EXTENSION IF NOT EXISTS "uuid-ossp";

-- Sources table: Track all our data sources

CREATE TABLE sources (

id UUID PRIMARY KEY DEFAULT uuid_generate_v4(),

name VARCHAR(255) NOT NULL,

type VARCHAR(50) NOT NULL CHECK (type IN ('twitter', 'telegram', 'rss')),

url VARCHAR(500),

username VARCHAR(255),

is_active BOOLEAN DEFAULT true,

config JSONB DEFAULT '{}',

created_at TIMESTAMP WITH TIME ZONE DEFAULT NOW(),

updated_at TIMESTAMP WITH TIME ZONE DEFAULT NOW()

);

-- Tweets table: Store Twitter/X data with engagement metrics

CREATE TABLE tweets (

id VARCHAR(255) PRIMARY KEY, -- Twitter's tweet ID

text TEXT NOT NULL,

author_id VARCHAR(255) NOT NULL,

author_username VARCHAR(255) NOT NULL,

author_name VARCHAR(255),

created_at TIMESTAMP WITH TIME ZONE NOT NULL,

-- Engagement metrics

retweet_count INTEGER DEFAULT 0,

like_count INTEGER DEFAULT 0,

reply_count INTEGER DEFAULT 0,

quote_count INTEGER DEFAULT 0,

-- Our computed fields

engagement_score INTEGER DEFAULT 0,

quality_score FLOAT DEFAULT 0,

-- Metadata

source_url VARCHAR(500),

raw_data JSONB,

processed_at TIMESTAMP WITH TIME ZONE DEFAULT NOW(),

-- Indexes for performance

CONSTRAINT tweets_engagement_score_check CHECK (engagement_score >= 0)

);

-- Telegram messages table

CREATE TABLE telegram_messages (

id UUID PRIMARY KEY DEFAULT uuid_generate_v4(),

message_id VARCHAR(255) NOT NULL,

channel_username VARCHAR(255) NOT NULL,

channel_title VARCHAR(255),

text TEXT NOT NULL,

author VARCHAR(255),

message_date TIMESTAMP WITH TIME ZONE NOT NULL,

-- Message metadata

views INTEGER DEFAULT 0,

forwards INTEGER DEFAULT 0,

replies INTEGER DEFAULT 0,

-- Our processing

quality_score FLOAT DEFAULT 0,

source_url VARCHAR(500),

raw_data JSONB,

fetched_at TIMESTAMP WITH TIME ZONE DEFAULT NOW(),

-- Unique constraint to prevent duplicates

UNIQUE(message_id, channel_username)

);

-- RSS articles table

CREATE TABLE rss_articles (

id UUID PRIMARY KEY DEFAULT uuid_generate_v4(),

title VARCHAR(500) NOT NULL,

link VARCHAR(500) NOT NULL UNIQUE,

description TEXT,

content TEXT,

author VARCHAR(255),

published_at TIMESTAMP WITH TIME ZONE,

-- Source information

feed_url VARCHAR(500) NOT NULL,

feed_title VARCHAR(255),

-- Our processing

quality_score FLOAT DEFAULT 0,

word_count INTEGER DEFAULT 0,

raw_data JSONB,

fetched_at TIMESTAMP WITH TIME ZONE DEFAULT NOW()

);

-- Digests table: Store generated summaries

CREATE TABLE digests (

id UUID PRIMARY KEY DEFAULT uuid_generate_v4(),

title VARCHAR(500) NOT NULL,

summary TEXT NOT NULL,

content JSONB NOT NULL, -- Structured digest data

-- Generation metadata

ai_model VARCHAR(100),

ai_provider VARCHAR(50),

token_usage JSONB,

-- Source data window

data_from TIMESTAMP WITH TIME ZONE NOT NULL,

data_to TIMESTAMP WITH TIME ZONE NOT NULL,

-- Publishing

published_to_slack BOOLEAN DEFAULT false,

slack_message_ts VARCHAR(255),

created_at TIMESTAMP WITH TIME ZONE DEFAULT NOW(),

updated_at TIMESTAMP WITH TIME ZONE DEFAULT NOW()

);

-- Create indexes for better query performance

CREATE INDEX idx_tweets_created_at ON tweets(created_at DESC);

CREATE INDEX idx_tweets_author_username ON tweets(author_username);

CREATE INDEX idx_tweets_engagement_score ON tweets(engagement_score DESC);

CREATE INDEX idx_telegram_messages_channel ON telegram_messages(channel_username);

CREATE INDEX idx_telegram_messages_date ON telegram_messages(message_date DESC);

CREATE INDEX idx_rss_articles_published ON rss_articles(published_at DESC);

CREATE INDEX idx_rss_articles_feed ON rss_articles(feed_url);

CREATE INDEX idx_digests_created ON digests(created_at DESC);

-- Create updated_at trigger function

CREATE OR REPLACE FUNCTION update_updated_at_column()

RETURNS TRIGGER AS $$

BEGIN

NEW.updated_at = NOW();

RETURN NEW;

END;

$$ language 'plpgsql';

-- Apply the trigger to tables that need it

CREATE TRIGGER update_sources_updated_at BEFORE UPDATE ON sources

FOR EACH ROW EXECUTE FUNCTION update_updated_at_column();

CREATE TRIGGER update_digests_updated_at BEFORE UPDATE ON digests

FOR EACH ROW EXECUTE FUNCTION update_updated_at_column();

🔧 Setting Up the Database

Now let's create a script to check and initialize our database. Due to Supabase's architecture, the most reliable way to set up tables is through their SQL Editor, but we'll create a helpful script that guides you through the process:

// scripts/db/init-db.ts

import { createClient } from '@supabase/supabase-js';

import { readFileSync } from 'fs';

import { join } from 'path';

import { config } from 'dotenv';

// Load environment variables

config({ path: '.env.local' });

const supabaseUrl = process.env.NEXT_PUBLIC_SUPABASE_URL!;

const supabaseServiceKey = process.env.SUPABASE_SERVICE_ROLE_KEY!;

if (!supabaseUrl || !supabaseServiceKey) {

console.error('❌ Missing Supabase credentials in environment variables');

console.log('\nPlease create .env.local with:');

console.log('NEXT_PUBLIC_SUPABASE_URL=https://your-project.supabase.co');

console.log('NEXT_PUBLIC_SUPABASE_ANON_KEY=your_anon_key');

console.log('SUPABASE_SERVICE_ROLE_KEY=your_service_key');

process.exit(1);

}

const supabase = createClient(supabaseUrl, supabaseServiceKey);

async function main() {

console.log('🚀 Supabase Database Setup Tool\n');

// Check which tables exist

const expectedTables = ['sources', 'tweets', 'telegram_messages', 'rss_articles', 'digests'];

const existingTables: string[] = [];

const missingTables: string[] = [];

console.log('🔍 Checking for existing tables...\n');

for (const tableName of expectedTables) {

try {

console.log(`Checking ${tableName}...`);

const { error } = await supabase

.from(tableName)

.select('*')

.limit(0);

if (!error) {

existingTables.push(tableName);

console.log(` ✅ ${tableName} exists`);

} else {

missingTables.push(tableName);

console.log(` ❌ ${tableName} missing`);

}

} catch (err) {

missingTables.push(tableName);

console.log(` ❌ ${tableName} missing (connection error)`);

}

}

console.log('\n📊 Database Status:');

console.log(` ✅ Existing tables: ${existingTables.length}/${expectedTables.length}`);

console.log(` ❌ Missing tables: ${missingTables.length}`);

if (missingTables.length === 0) {

console.log('\n🎉 All tables exist! Your database is ready.');

// Quick test

try {

const { data, error } = await supabase

.from('sources')

.select('count');

if (!error) {

console.log('✅ Database operations are working correctly');

}

} catch (err) {

console.log('⚠️ Tables exist but there might be permission issues');

}

return;

}

// Show setup instructions

console.log('\n🔧 Setup Required!');

console.log('\nTo create the missing tables:');

console.log('\n📝 Method 1 - Supabase Dashboard (Recommended):');

console.log(' 1. Go to https://supabase.com/dashboard');

console.log(' 2. Select your project');

console.log(' 3. Click "SQL Editor" in the left sidebar');

console.log(' 4. Copy the SQL below and paste it');

console.log(' 5. Click "Run"');

console.log('\n📄 SQL to copy and paste:');

console.log('=' + '='.repeat(80));

try {

const schemaPath = join(__dirname, 'schema.sql');

const schema = readFileSync(schemaPath, 'utf-8');

console.log(schema);

} catch (err) {

console.log('❌ Could not read schema.sql file');

console.log('Make sure scripts/db/schema.sql exists');

}

console.log('=' + '='.repeat(80));

console.log('\n✅ After running the SQL, run this script again to verify!');

}

main().catch((error) => {

console.error('\n❌ Script failed:', error.message);

console.log('\nTroubleshooting:');

console.log('1. Check your .env.local file has valid Supabase credentials');

console.log('2. Verify your Supabase project is active');

console.log('3. Make sure your service role key is correct');

process.exit(1);

});

🚀 Database Setup Process

Step 1: Run the setup script to check your database:

npm run script scripts/db/init-db.ts

Step 2: If tables are missing, use the Supabase SQL Editor:

- Go to your Supabase dashboard at supabase.com/dashboard

- Select your project

- Click "SQL Editor" in the left sidebar

- Copy the SQL output from the script above

- Paste it in the SQL Editor and click "Run"

Step 3: Verify the setup:

npm run script scripts/db/init-db.ts

You should see: 🎉 All tables exist! Your database is ready.

💡 Why This Approach?

Supabase's architecture makes direct SQL execution through their JavaScript client challenging for schema creation. The most reliable approach is:

- ✅ SQL Editor: Direct database access with full permissions

- ✅ Verification Script: Ensures everything is set up correctly

- ✅ Error-free: No complex connection handling or permission issues

- ✅ Reproducible: Easy to re-run and verify

📝 TypeScript Types: Type Safety for Your Data

Now let's create TypeScript interfaces that match our database schema. This gives us compile-time safety and excellent IDE support:

// types/database.ts

export interface Database {

public: {

Tables: {

sources: {

Row: Source;

Insert: Omit<Source, 'id' | 'created_at' | 'updated_at'> & {

id?: string;

created_at?: string;

updated_at?: string;

};

Update: Partial<Source>;

};

tweets: {

Row: Tweet;

Insert: Omit<Tweet, 'processed_at'> & {

processed_at?: string;

};

Update: Partial<Tweet>;

};

telegram_messages: {

Row: TelegramMessage;

Insert: Omit<TelegramMessage, 'id' | 'fetched_at'> & {

id?: string;

fetched_at?: string;

};

Update: Partial<TelegramMessage>;

};

rss_articles: {

Row: RSSArticle;

Insert: Omit<RSSArticle, 'id' | 'fetched_at'> & {

id?: string;

fetched_at?: string;

};

Update: Partial<RSSArticle>;

};

digests: {

Row: Digest;

Insert: Omit<Digest, 'id' | 'created_at' | 'updated_at'> & {

id?: string;

created_at?: string;

updated_at?: string;

};

Update: Partial<Digest>;

};

};

};

}

// Individual table types

export interface Source {

id: string;

name: string;

type: 'twitter' | 'telegram' | 'rss';

url?: string;

username?: string;

is_active: boolean;

config: Record<string, any>;

created_at: string;

updated_at: string;

}

export interface Tweet {

id: string; // Twitter's tweet ID

text: string;

author_id: string;

author_username: string;

author_name?: string;

created_at: string;

// Engagement metrics

retweet_count: number;

like_count: number;

reply_count: number;

quote_count: number;

// Computed fields

engagement_score: number;

quality_score: number;

// Metadata

source_url?: string;

raw_data?: Record<string, any>;

processed_at: string;

}

export interface TelegramMessage {

id: string;

message_id: string;

channel_username: string;

channel_title?: string;

text: string;

author?: string;

message_date: string;

// Engagement

views: number;

forwards: number;

replies: number;

// Processing

quality_score: number;

source_url?: string;

raw_data?: Record<string, any>;

fetched_at: string;

}

export interface RSSArticle {

id: string;

title: string;

link: string;

description?: string;

content?: string;

author?: string;

published_at?: string;

// Source

feed_url: string;

feed_title?: string;

// Processing

quality_score: number;

word_count: number;

raw_data?: Record<string, any>;

fetched_at: string;

}

export interface Digest {

id: string;

title: string;

summary: string;

content: DigestContent;

// AI metadata

ai_model?: string;

ai_provider?: string;

token_usage?: TokenUsage;

// Data scope

data_from: string;

data_to: string;

// Publishing

published_to_slack: boolean;

slack_message_ts?: string;

created_at: string;

updated_at: string;

}

// Supporting types

export interface DigestContent {

sections: DigestSection[];

tweets: TweetDigestItem[];

articles: ArticleDigestItem[];

telegram_messages?: TelegramDigestItem[];

metadata: {

total_sources: number;

processing_time_ms: number;

model_config: any;

};

}

export interface DigestSection {

title: string;

summary: string;

key_points: string[];

source_count: number;

}

export interface TweetDigestItem {

id: string;

text: string;

author: string;

url: string;

engagement: {

likes: number;

retweets: number;

replies: number;

};

relevance_score: number;

}

export interface ArticleDigestItem {

title: string;

summary: string;

url: string;

source: string;

published_at: string;

relevance_score: number;

}

export interface TelegramDigestItem {

text: string;

channel: string;

author?: string;

url: string;

date: string;

relevance_score: number;

}

export interface TokenUsage {

prompt_tokens: number;

completion_tokens: number;

total_tokens: number;

reasoning_tokens?: number;

}

🔌 Supabase Client Setup

Let's create our database client with proper configuration:

// lib/supabase/supabase-client.ts

import { createClient } from '@supabase/supabase-js';

import type { Database } from '../../types/database';

const supabaseUrl = process.env.NEXT_PUBLIC_SUPABASE_URL;

const supabaseAnonKey = process.env.NEXT_PUBLIC_SUPABASE_ANON_KEY;

if (!supabaseUrl || !supabaseAnonKey) {

throw new Error('Missing Supabase environment variables');

}

// Create the client with proper typing

export const supabase = createClient<Database>(

supabaseUrl,

supabaseAnonKey,

{

auth: {

persistSession: false, // We're not using auth for now

},

db: {

schema: 'public',

},

global: {

headers: {

'X-Client-Info': 'cl-digest-bot',

},

},

}

);

// Utility function to check connection

export async function testConnection(): Promise<boolean> {

try {

const { data, error } = await supabase

.from('sources')

.select('count')

.limit(1);

return !error;

} catch (error) {

console.error('Database connection failed:', error);

return false;

}

}

📊 Professional Logging: Your Debugging Superpower

Now let's create a robust logging system using Winston. This will be invaluable for monitoring our system in production:

// lib/logger/index.ts

import winston from 'winston';

import { join } from 'path';

// Define log levels and colors

const logLevels = {

error: 0,

warn: 1,

info: 2,

http: 3,

debug: 4,

};

const logColors = {

error: 'red',

warn: 'yellow',

info: 'green',

http: 'magenta',

debug: 'white',

};

// Tell winston about our colors

winston.addColors(logColors);

// Custom format for console output

const consoleFormat = winston.format.combine(

winston.format.timestamp({ format: 'YYYY-MM-DD HH:mm:ss' }),

winston.format.colorize({ all: true }),

winston.format.printf(

(info) => `${info.timestamp} ${info.level}: ${info.message}`

)

);

// Format for file output (JSON for easier parsing)

const fileFormat = winston.format.combine(

winston.format.timestamp(),

winston.format.errors({ stack: true }),

winston.format.json()

);

// Create the logger

const logger = winston.createLogger({

level: process.env.NODE_ENV === 'development' ? 'debug' : 'info',

levels: logLevels,

format: fileFormat,

defaultMeta: { service: 'cl-digest-bot' },

transports: [

// Write all logs with importance level of 'error' or less to error.log

new winston.transports.File({

filename: join(process.cwd(), 'logs', 'error.log'),

level: 'error',

}),

// Write all logs to combined.log

new winston.transports.File({

filename: join(process.cwd(), 'logs', 'combined.log'),

}),

],

});

// Add console output for development

if (process.env.NODE_ENV !== 'production') {

logger.add(

new winston.transports.Console({

format: consoleFormat,

})

);

}

// Create logs directory if it doesn't exist

import { mkdirSync } from 'fs';

try {

mkdirSync(join(process.cwd(), 'logs'), { recursive: true });

} catch (error) {

// Directory already exists, ignore

}

export default logger;

// Helper functions for common log patterns

export const logError = (message: string, error?: any, metadata?: any) => {

logger.error(message, { error: error?.message || error, stack: error?.stack, ...metadata });

};

export const logInfo = (message: string, metadata?: any) => {

logger.info(message, metadata);

};

export const logDebug = (message: string, metadata?: any) => {

logger.debug(message, metadata);

};

export const logWarning = (message: string, metadata?: any) => {

logger.warn(message, metadata);

};

📈 Progress Tracking: Visual Feedback for Long Operations

Let's create a progress tracking system that integrates with our logging:

// utils/progress.ts

import cliProgress from 'cli-progress';

import logger from '../lib/logger';

export interface ProgressConfig {

total: number;

label: string;

showPercentage?: boolean;

showETA?: boolean;

}

export class ProgressTracker {

private bar: cliProgress.SingleBar | null = null;

private startTime: number = 0;

private label: string = '';

constructor(config: ProgressConfig) {

this.label = config.label;

this.startTime = Date.now();

// Create progress bar with custom format

this.bar = new cliProgress.SingleBar({

format: `${config.label} [{bar}] {percentage}% | ETA: {eta}s | {value}/{total}`,

hideCursor: true,

barCompleteChar: '█',

barIncompleteChar: '░',

clearOnComplete: false,

stopOnComplete: true,

}, cliProgress.Presets.shades_classic);

this.bar.start(config.total, 0);

logger.info(`Started: ${config.label}`, { total: config.total });

}

update(current: number, data?: any): void {

if (this.bar) {

this.bar.update(current, data);

}

}

increment(data?: any): void {

if (this.bar) {

this.bar.increment(data);

}

}

complete(message?: string): void {

if (this.bar) {

this.bar.stop();

}

const duration = Date.now() - this.startTime;

const completionMessage = message || `Completed: ${this.label}`;

logger.info(completionMessage, {

duration_ms: duration,

duration_formatted: `${(duration / 1000).toFixed(2)}s`

});

console.log(`✅ ${completionMessage} (${(duration / 1000).toFixed(2)}s)`);

}

fail(error: string): void {

if (this.bar) {

this.bar.stop();

}

const duration = Date.now() - this.startTime;

logger.error(`Failed: ${this.label}`, { error, duration_ms: duration });

console.log(`❌ Failed: ${this.label} - ${error}`);

}

}

// Progress manager for multiple concurrent operations

export class ProgressManager {

private trackers: Map<string, ProgressTracker> = new Map();

create(id: string, config: ProgressConfig): ProgressTracker {

const tracker = new ProgressTracker(config);

this.trackers.set(id, tracker);

return tracker;

}

get(id: string): ProgressTracker | undefined {

return this.trackers.get(id);

}

complete(id: string, message?: string): void {

const tracker = this.trackers.get(id);

if (tracker) {

tracker.complete(message);

this.trackers.delete(id);

}

}

fail(id: string, error: string): void {

const tracker = this.trackers.get(id);

if (tracker) {

tracker.fail(error);

this.trackers.delete(id);

}

}

cleanup(): void {

this.trackers.clear();

}

}

// Global progress manager instance

export const progressManager = new ProgressManager();

🧪 Testing Your Database Setup

Let's create a comprehensive test to verify everything is working:

// scripts/db/test-connection.ts

import { config } from 'dotenv';

import { supabase, testConnection } from '../../lib/supabase/supabase-client';

import logger, { logInfo, logError } from '../../lib/logger';

import { ProgressTracker } from '../../utils/progress';

// Load environment variables

config({ path: '.env.local' });

async function testDatabaseSetup() {

const progress = new ProgressTracker({

total: 6,

label: 'Testing Database Setup'

});

try {

// Test 1: Connection

progress.update(1, { test: 'Connection' });

const connected = await testConnection();

if (!connected) {

throw new Error('Failed to connect to database');

}

logInfo('✅ Database connection successful');

// Test 2: Tables exist

progress.update(2, { test: 'Tables' });

const expectedTables = ['sources', 'tweets', 'telegram_messages', 'rss_articles', 'digests'];

const foundTables: string[] = [];

for (const tableName of expectedTables) {

const { error } = await supabase

.from(tableName)

.select('*')

.limit(0);

if (!error) {

foundTables.push(tableName);

}

}

if (expectedTables.every(table => foundTables.includes(table))) {

logInfo('✅ All required tables exist', { tables: foundTables });

} else {

throw new Error(`Missing tables: ${expectedTables.filter(t => !foundTables.includes(t))}`);

}

// Test 3: Insert test data

progress.update(3, { test: 'Insert' });

const { data: sourceData, error: insertError } = await supabase

.from('sources')

.insert({

name: 'test_source',

type: 'twitter',

username: 'test_user',

config: { test: true }

})

.select()

.single();

if (insertError) {

throw new Error(`Insert failed: ${insertError.message}`);

}

logInfo('✅ Test insert successful', { id: sourceData.id });

// Test 4: Query test data

progress.update(4, { test: 'Query' });

const { data: queryData, error: queryError } = await supabase

.from('sources')

.select('*')

.eq('name', 'test_source')

.single();

if (queryError || !queryData) {

throw new Error(`Query failed: ${queryError?.message}`);

}

logInfo('✅ Test query successful', { name: queryData.name });

// Test 5: Update test data

progress.update(5, { test: 'Update' });

const { error: updateError } = await supabase

.from('sources')

.update({ is_active: false })

.eq('id', sourceData.id);

if (updateError) {

throw new Error(`Update failed: ${updateError.message}`);

}

logInfo('✅ Test update successful');

// Test 6: Clean up

progress.update(6, { test: 'Cleanup' });

const { error: deleteError } = await supabase

.from('sources')

.delete()

.eq('id', sourceData.id);

if (deleteError) {

throw new Error(`Cleanup failed: ${deleteError.message}`);

}

logInfo('✅ Test cleanup successful');

progress.complete('Database setup test completed successfully!');

} catch (error) {

logError('Database test failed', error);

progress.fail(error instanceof Error ? error.message : 'Unknown error');

process.exit(1);

}

}

// Run the test

testDatabaseSetup();

Run the database test:

npm run test:db

🎯 What We've Accomplished

Incredible work! You've just built the data foundation for a production-ready system:

✅ Supabase database with optimized schema design

✅ Type-safe database interfaces with full TypeScript support

✅ Professional logging system with multiple transports

✅ Progress tracking for long-running operations

✅ Comprehensive testing to verify everything works

🔍 Pro Tips & Common Pitfalls

💡 Pro Tip: Always use database indexes on columns you'll query frequently. We've added indexes for created_at, author_username, and engagement_score.

⚠️ Common Pitfall: Don't store your service role key in client-side code! It has admin privileges. Use the anon key for frontend operations.

🔧 Performance Tip: PostgreSQL's JSONB type is incredibly powerful for storing metadata while maintaining query performance.

📋 Complete Code Summary - Chapter 2

Here are all the files you should have created:

Database Schema:

# Created: scripts/db/schema.sql (database tables and indexes)

# Created: scripts/db/init-db.ts (database initialization script)

TypeScript Types:

# Created: types/database.ts (complete type definitions)

Core Infrastructure:

# Created: lib/supabase/supabase-client.ts (database client)

# Created: lib/logger/index.ts (Winston logging setup)

# Created: utils/progress.ts (progress tracking utilities)

Testing:

# Created: scripts/db/test-connection.ts (comprehensive database test)

Package.json scripts to add:

{

"scripts": {

"test:db": "npm run script scripts/db/test-connection.ts",

"init:db": "npm run script scripts/db/init-db.ts"

}

}

Your database is now ready to handle:

- 📊 Thousands of tweets with engagement metrics

- 💬 Telegram messages from multiple channels

- 📰 RSS articles with content analysis

- 🤖 AI-generated digests with metadata

- 📈 Performance monitoring through logs

🍾 Chapter 2 Complete

You can check your code with the completed Chapter 2 code here.

Next up: In Chapter 3, we'll create the centralized configuration system that makes managing multiple data sources effortless. We'll build type-safe configs that let you fine-tune everything from API rate limits to content quality thresholds—all from one place!

Ready to continue? The next chapter will show you how to build configuration that scales as your system grows. No more hunting through code to change settings! 🚀

Chapter 3

Smart Configuration - Managing Settings Like a Pro

"Complexity is the enemy of execution." - Tony Robbins

You know what separates a weekend project from a production system? Configuration management.

Picture this: You've built an amazing content aggregator, but now you need to tweak how many tweets to fetch from each account, adjust cache durations, or change quality thresholds. In most projects, you'd be hunting through dozens of files, changing hardcoded values, and hoping you didn't break anything.

We're going to do better. Much better.

In this chapter, we'll build a centralized configuration system that's so clean and intuitive, you'll wonder why every project doesn't work this way. By the end, you'll be able to configure your entire system from one place, with full TypeScript safety and zero guesswork.

🎯 What We're Building

A configuration system that:

- Centralizes all settings in one place

- Provides sensible defaults that work out of the box

- Allows per-source overrides (some Twitter accounts need different settings)

- Validates configuration at startup to catch errors early

- Scales beautifully as you add new data sources

Let's start simple and build up.

🏗️ The Foundation: Basic Types

First, let's define what each data source needs to configure:

// config/types.ts

// Twitter/X account configuration

export interface XAccountConfig {

tweetsPerRequest: number; // How many tweets to fetch per API call (5-100)

maxPages: number; // How many pages to paginate through

cacheHours: number; // Hours before refreshing cached data

minTweetLength: number; // Filter out short tweets

minEngagementScore: number; // Filter out low-engagement tweets

}

// Telegram channel configuration

export interface TelegramChannelConfig {

messagesPerChannel: number; // How many messages to fetch

cacheHours: number; // Cache duration

minMessageLength: number; // Filter short messages

}

// RSS feed configuration

export interface RssFeedConfig {

articlesPerFeed: number; // How many articles to fetch

cacheHours: number; // Cache duration

minArticleLength: number; // Filter short articles

maxArticleLength: number; // Trim very long articles

}

Why these specific settings? Each one solves a real problem:

- tweetsPerRequest: Twitter API limits, but more = fewer API calls

- cacheHours: Balance between freshness and API costs

- minEngagementScore: Quality filter - ignore tweets nobody cared about

- maxArticleLength: Prevent token overflow in AI processing

📊 The Configuration Hub

Now let's create our main configuration file. This is where the magic happens:

// config/data-sources-config.ts

import { XAccountConfig, TelegramChannelConfig, RssFeedConfig } from './types';

/**

* Twitter/X Configuration

*

* Provides defaults and per-account overrides for Twitter data collection

*/

export const xConfig = {

// Global defaults - work for 90% of accounts

defaults: {

tweetsPerRequest: 100, // Max allowed by Twitter API

maxPages: 2, // 200 tweets total per account

cacheHours: 5, // Refresh every 5 hours

minTweetLength: 50, // Skip very short tweets

minEngagementScore: 5, // Skip tweets with <5 total engagement

} as XAccountConfig,

// Special cases - accounts that need different settings

accountOverrides: {

// High-volume accounts - get more data

'elonmusk': { maxPages: 5 },

'unusual_whales': { maxPages: 5 },

// News accounts - shorter cache for breaking news

'breakingnews': { cacheHours: 2 },

// Technical accounts - allow shorter tweets (code snippets)

'dan_abramov': { minTweetLength: 20 },

} as Record<string, Partial<XAccountConfig>>

};

/**

* Telegram Configuration

*/

export const telegramConfig = {

defaults: {

messagesPerChannel: 50, // 50 messages per channel

cacheHours: 5, // Same as Twitter

minMessageLength: 30, // Skip very short messages

} as TelegramChannelConfig,

channelOverrides: {

// High-activity channels

'financial_express': { messagesPerChannel: 100 },

// News channels - fresher data

'cryptonews': { cacheHours: 3 },

} as Record<string, Partial<TelegramChannelConfig>>

};

/**

* RSS Configuration

*/

export const rssConfig = {

defaults: {

articlesPerFeed: 20, // 20 articles per feed

cacheHours: 6, // RSS updates less frequently

minArticleLength: 200, // Skip very short articles

maxArticleLength: 5000, // Trim long articles to save tokens

} as RssFeedConfig,

feedOverrides: {

// High-volume tech blogs

'https://techcrunch.com/feed/': { articlesPerFeed: 10 },

// Academic feeds - longer cache OK

'https://arxiv.org/rss/cs.AI': { cacheHours: 12 },

} as Record<string, Partial<RssFeedConfig>>

};

// Helper functions to get configuration for specific sources

export function getXAccountConfig(username: string): XAccountConfig {

const override = xConfig.accountOverrides[username] || {};

return { ...xConfig.defaults, ...override };

}

export function getTelegramChannelConfig(channelName: string): TelegramChannelConfig {

const override = telegramConfig.channelOverrides[channelName] || {};

return { ...telegramConfig.defaults, ...override };

}

export function getRssFeedConfig(feedUrl: string): RssFeedConfig {

const override = rssConfig.feedOverrides[feedUrl] || {};

return { ...rssConfig.defaults, ...override };

}

What makes this powerful?

- Sensible defaults - Works immediately without any configuration

- Easy overrides - Just add an account/channel to the overrides object

- Type safety - TypeScript catches configuration errors at compile time

- Helper functions - Simple API to get config anywhere in your code

🧪 Configuration Validation

Let's add validation to catch configuration errors early:

// config/validator.ts

import { XAccountConfig, TelegramChannelConfig, RssFeedConfig } from './types';

import { xConfig, telegramConfig, rssConfig } from './data-sources-config';

interface ValidationError {

source: string;

field: string;

value: any;

message: string;

}

export class ConfigValidator {

private errors: ValidationError[] = [];

validateXConfig(): ValidationError[] {

this.errors = [];

// Validate defaults

this.validateXAccountConfig('defaults', xConfig.defaults);

// Validate all overrides

Object.entries(xConfig.accountOverrides).forEach(([account, config]) => {

const fullConfig = { ...xConfig.defaults, ...config };

this.validateXAccountConfig(`account:${account}`, fullConfig);

});

return this.errors;

}

private validateXAccountConfig(source: string, config: XAccountConfig): void {

// Twitter API limits

if (config.tweetsPerRequest < 5 || config.tweetsPerRequest > 100) {

this.addError(source, 'tweetsPerRequest', config.tweetsPerRequest,

'Must be between 5 and 100 (Twitter API limit)');

}

// Reasonable pagination limits

if (config.maxPages < 1 || config.maxPages > 10) {

this.addError(source, 'maxPages', config.maxPages,

'Must be between 1 and 10 (avoid excessive API calls)');

}

// Cache duration sanity check

if (config.cacheHours < 1 || config.cacheHours > 24) {

this.addError(source, 'cacheHours', config.cacheHours,

'Must be between 1 and 24 hours');

}

// Text length validation

if (config.minTweetLength < 1 || config.minTweetLength > 280) {

this.addError(source, 'minTweetLength', config.minTweetLength,

'Must be between 1 and 280 characters');

}

}

validateTelegramConfig(): ValidationError[] {

this.errors = [];

// Validate defaults

this.validateTelegramChannelConfig('defaults', telegramConfig.defaults);

// Validate overrides

Object.entries(telegramConfig.channelOverrides).forEach(([channel, config]) => {

const fullConfig = { ...telegramConfig.defaults, ...config };

this.validateTelegramChannelConfig(`channel:${channel}`, fullConfig);

});

return this.errors;

}

private validateTelegramChannelConfig(source: string, config: TelegramChannelConfig): void {

if (config.messagesPerChannel < 1 || config.messagesPerChannel > 500) {

this.addError(source, 'messagesPerChannel', config.messagesPerChannel,

'Must be between 1 and 500');

}

if (config.cacheHours < 1 || config.cacheHours > 24) {

this.addError(source, 'cacheHours', config.cacheHours,

'Must be between 1 and 24 hours');

}

}

validateRssConfig(): ValidationError[] {

this.errors = [];

this.validateRssFeedConfig('defaults', rssConfig.defaults);

Object.entries(rssConfig.feedOverrides).forEach(([feed, config]) => {

const fullConfig = { ...rssConfig.defaults, ...config };

this.validateRssFeedConfig(`feed:${feed}`, fullConfig);

});

return this.errors;

}

private validateRssFeedConfig(source: string, config: RssFeedConfig): void {

if (config.articlesPerFeed < 1 || config.articlesPerFeed > 100) {

this.addError(source, 'articlesPerFeed', config.articlesPerFeed,

'Must be between 1 and 100');

}

if (config.maxArticleLength <= config.minArticleLength) {

this.addError(source, 'maxArticleLength', config.maxArticleLength,

'Must be greater than minArticleLength');

}

}

private addError(source: string, field: string, value: any, message: string): void {

this.errors.push({ source, field, value, message });

}

// Validate all configurations

validateAll(): ValidationError[] {

const allErrors = [

...this.validateXConfig(),

...this.validateTelegramConfig(),

...this.validateRssConfig()

];

return allErrors;

}

}

// Export a singleton validator

export const configValidator = new ConfigValidator();

🔧 Environment-Based Configuration

Let's add environment-specific settings for development vs production:

// config/environment.ts

export interface EnvironmentConfig {

development: boolean;

apiTimeouts: {

twitter: number;

telegram: number;

rss: number;

};

logging: {

level: string;

enableConsole: boolean;

};

rateLimit: {

respectLimits: boolean;

bufferTimeMs: number;

};

}

function getEnvironmentConfig(): EnvironmentConfig {

const isDev = process.env.NODE_ENV === 'development';

return {

development: isDev,

apiTimeouts: {

twitter: isDev ? 10000 : 30000, // Shorter timeouts in dev

telegram: isDev ? 15000 : 45000,

rss: isDev ? 5000 : 15000,

},

logging: {

level: isDev ? 'debug' : 'info',

enableConsole: isDev,

},

rateLimit: {

respectLimits: true,

bufferTimeMs: isDev ? 1000 : 5000, // Less aggressive in dev

}

};

}

export const envConfig = getEnvironmentConfig();

🧪 Testing Your Configuration

Let's create a test to verify our configuration is working correctly:

// scripts/test/test-config.ts

import { config } from 'dotenv';

config({ path: '.env.local' });

import {

getXAccountConfig,

getTelegramChannelConfig,

getRssFeedConfig

} from '../../config/data-sources-config';

import { configValidator } from '../../config/validator';

import { envConfig } from '../../config/environment';

import logger from '../../lib/logger';

async function testConfiguration() {

console.log('🔧 Testing Configuration System...\n');

// Test 1: Default configurations

console.log('1. Testing Default Configurations:');

const defaultXConfig = getXAccountConfig('random_user');

console.log(`✅ X defaults: ${defaultXConfig.tweetsPerRequest} tweets, ${defaultXConfig.cacheHours}h cache`);

const defaultTelegramConfig = getTelegramChannelConfig('random_channel');

console.log(`✅ Telegram defaults: ${defaultTelegramConfig.messagesPerChannel} messages, ${defaultTelegramConfig.cacheHours}h cache`);

const defaultRssConfig = getRssFeedConfig('https://example.com/feed.xml');

console.log(`✅ RSS defaults: ${defaultRssConfig.articlesPerFeed} articles, ${defaultRssConfig.cacheHours}h cache`);

// Test 2: Override configurations

console.log('\n2. Testing Override Configurations:');

const elonConfig = getXAccountConfig('elonmusk');

console.log(`✅ Elon override: ${elonConfig.maxPages} pages (default is 2)`);

const newsConfig = getXAccountConfig('breakingnews');

console.log(`✅ Breaking news override: ${newsConfig.cacheHours}h cache (default is 5)`);

// Test 3: Validation

console.log('\n3. Testing Configuration Validation:');

const validationErrors = configValidator.validateAll();

if (validationErrors.length === 0) {

console.log('✅ All configurations are valid');

} else {

console.log('❌ Configuration errors found:');

validationErrors.forEach(error => {

console.log(` - ${error.source}.${error.field}: ${error.message}`);

});

}

// Test 4: Environment configuration

console.log('\n4. Testing Environment Configuration:');

console.log(`✅ Environment: ${envConfig.development ? 'Development' : 'Production'}`);

console.log(`✅ Twitter timeout: ${envConfig.apiTimeouts.twitter}ms`);

console.log(`✅ Log level: ${envConfig.logging.level}`);

// Test 5: Type safety demonstration

console.log('\n5. Demonstrating Type Safety:');

// This would cause a TypeScript error:

// const badConfig = getXAccountConfig('test');

// badConfig.invalidProperty = 'error'; // ← TypeScript catches this!

console.log('✅ TypeScript prevents invalid configuration properties');

console.log('\n🎉 Configuration system test completed successfully!');

}

// Run the test

testConfiguration().catch(error => {

logger.error('Configuration test failed', error);

process.exit(1);

});

📝 Using Configuration in Your Code

Here's how simple it is to use configuration throughout your application:

// Example: Using configuration in a Twitter client

import { getXAccountConfig } from '../config/data-sources-config';

export class TwitterClient {

async fetchTweets(username: string) {

// Get configuration for this specific account

const config = getXAccountConfig(username);

console.log(`Fetching ${config.tweetsPerRequest} tweets from @${username}`);

console.log(`Will paginate through ${config.maxPages} pages`);

console.log(`Cache expires in ${config.cacheHours} hours`);

// Use the configuration values

const tweets = await this.api.getUserTweets(username, {

max_results: config.tweetsPerRequest,

// ... other Twitter API parameters

});

// Filter based on configuration

return tweets.filter(tweet =>

tweet.text.length >= config.minTweetLength &&

this.calculateEngagement(tweet) >= config.minEngagementScore

);

}

}

Package.json script to add:

{

"scripts": {

"test:config": "npm run script scripts/test/test-config.ts"

}

}

Run your configuration test:

npm run test:config

🎯 What We've Accomplished

You've just built a configuration system that's both simple and powerful:

✅ Centralized configuration - One file to rule them all

✅ Smart defaults - Works out of the box

✅ Flexible overrides - Customize per source without complexity

✅ Type safety - Catch errors at compile time

✅ Validation - Prevent invalid configurations

✅ Environment awareness - Different settings for dev/prod

🔍 Pro Tips & Common Pitfalls

💡 Pro Tip: Start with generous defaults, then optimize. It's easier to lower limits than explain why your system is too aggressive.

⚠️ Common Pitfall: Don't over-configure. If 90% of sources use the same setting, make it the default.

🔧 Performance Tip: Cache configuration lookups if you're calling them frequently. Our helper functions are already optimized.

📋 Complete Code Summary - Chapter 3

Here are all the files you should create:

Configuration Types:

// config/types.ts

export interface XAccountConfig {

tweetsPerRequest: number;

maxPages: number;

cacheHours: number;

minTweetLength: number;

minEngagementScore: number;

}

// ... (other interfaces)

Main Configuration:

// config/data-sources-config.ts

export const xConfig = {

defaults: { /* ... */ },

accountOverrides: { /* ... */ }

};

// ... (helper functions)

Validation System:

// config/validator.ts

export class ConfigValidator {

validateAll(): ValidationError[] { /* ... */ }

}

Environment Config:

// config/environment.ts

export const envConfig = getEnvironmentConfig();

Testing:

// scripts/test/test-config.ts

// Complete configuration testing suite

🍾 Chapter 3 Complete

Cross-reference your code with the source code here.

Next up: In Chapter 4, we dive into the exciting world of web scraping! We'll build our Twitter API client with intelligent rate limiting, content filtering, and engagement analysis. Get ready to tap into the social media firehose! 🚀

Ready to start collecting data? The next chapter will show you how to build a robust Twitter scraping system that respects API limits while maximizing data quality. The real fun begins now! 🐦

Chapter 4

Tapping Into the Twitter Firehose - Smart Social Media Collection

"The best way to find out if you can trust somebody is to trust them." - Ernest Hemingway

Here's where things get exciting! We're about to tap into one of the world's largest real-time information streams. Twitter (now X) processes over 500 million tweets daily - that's a treasure trove of breaking news, market sentiment, and trending topics.

But here's the reality check: Twitter's API isn't free. Their pricing can add up quickly, especially when you're experimenting and learning.

💰 Twitter API: To Pay or Not to Pay?

Twitter API Pricing (as of 2024):

- Free tier: 1,500 tweets/month (severely limited)

- Basic tier: $100/month for 10,000 tweets

- Pro tier: $5,000/month for 1M tweets

🤔 Should You Skip Twitter Integration?

Skip Twitter if:

- You're just learning and don't want recurring costs

- You have other data sources (Telegram, RSS) that meet your needs

- You want to focus on AI processing rather than data collection

Include Twitter if:

- You need real-time social sentiment

- You're building for a business that can justify the cost

- You want to learn professional API integration patterns

🚀 Option 1: Skip Twitter and Jump Ahead

If you want to skip Twitter integration, here's what to do:

- Skip to Chapter 5 (Telegram) - Free data source with rich content

- Update your configuration to disable Twitter:

// config/data-sources-config.ts

export const systemConfig = {

enabledSources: {

twitter: false, // ← Set this to false

telegram: true, // Free alternative

rss: true // Also free

}

};

- Mock Twitter data for testing (we'll show you how)

- Come back later when you're ready to add Twitter

🐦 Option 2: Build the Full Twitter Integration

If you're ready to invest in Twitter's API, let's build something amazing! We'll create a robust Twitter client that:

- Respects rate limits (avoid getting blocked)

- Caches intelligently (minimize API costs)

- Filters for quality (ignore noise, focus on signal)

- Handles errors gracefully (API failures happen)

🔑 Setting Up Twitter API Credentials

-

Visit developer.twitter.com

-

Apply for API access (they'll ask about your use case)

-

Create a new app and note down:

- API Key

- API Secret Key

- Bearer Token

-

Add to your

.env.local:

# Twitter/X API Credentials

X_API_KEY=your_api_key_here

X_API_SECRET=your_api_secret_here

X_BEARER_TOKEN=your_bearer_token_here

📊 Twitter Data Types

Let's define what data we'll collect and how we'll structure it:

// types/twitter.ts

export interface TwitterUser {

id: string;

username: string;

name: string;

description?: string;

verified: boolean;

followers_count: number;

following_count: number;

}

export interface TwitterTweet {

id: string;

text: string;

author_id: string;

created_at: string;

// Engagement metrics

public_metrics: {

retweet_count: number;

like_count: number;

reply_count: number;

quote_count: number;

};

// Content analysis

entities?: {

urls?: Array<{ expanded_url: string; title?: string }>;

hashtags?: Array<{ tag: string }>;

mentions?: Array<{ username: string }>;

};

// Context

context_annotations?: Array<{

domain: { name: string };

entity: { name: string };

}>;

}

export interface TweetWithEngagement extends TwitterTweet {

author_username: string;

author_name: string;

engagement_score: number;

quality_score: number;

processed_at: string;

}

🚀 Building the Twitter API Client

Now let's build our Twitter client with all the production-ready features:

// lib/twitter/twitter-client.ts

import { TwitterApi, TwitterApiReadOnly, TweetV2, UserV2 } from 'twitter-api-v2';

import { TwitterTweet, TwitterUser, TweetWithEngagement } from '../../types/twitter';

import { getXAccountConfig } from '../../config/data-sources-config';

import { envConfig } from '../../config/environment';

import logger from '../logger';

import { ProgressTracker } from '../../utils/progress';

import { config } from 'dotenv';

// Load environment variables

config({ path: '.env.local' });

interface RateLimitInfo {

limit: number;

remaining: number;

reset: number; // Unix timestamp

}

export class TwitterClient {

private client: TwitterApiReadOnly;

private rateLimitInfo: Map<string, RateLimitInfo> = new Map();

constructor() {

// For Twitter API v2, we need Bearer Token for OAuth 2.0 Application-Only auth

const bearerToken = process.env.X_BEARER_TOKEN;

const apiKey = process.env.X_API_KEY;

const apiSecret = process.env.X_API_SECRET;

// Try Bearer Token first (recommended for v2 API)

if (bearerToken) {

this.client = new TwitterApi(bearerToken).readOnly;

}

// Fallback to App Key/Secret (OAuth 1.0a style)

else if (apiKey && apiSecret) {

this.client = new TwitterApi({

appKey: apiKey,

appSecret: apiSecret,

}).readOnly;

}

else {

throw new Error('Missing Twitter API credentials. Need either X_BEARER_TOKEN or both X_API_KEY and X_API_SECRET in .env.local file.');

}

logger.info('Twitter client initialized with proper authentication');

}

/**

* Fetch tweets from a specific user

*/

async fetchUserTweets(username: string): Promise<TweetWithEngagement[]> {

// Check API quota before starting expensive operations

await this.checkApiQuota();

const config = getXAccountConfig(username);

const progress = new ProgressTracker({

total: config.maxPages,

label: `Fetching tweets from @${username}`

});

try {

// Check rate limits before starting

await this.checkRateLimit('users/by/username/:username/tweets');

// Get user info first

const user = await this.getUserByUsername(username);

if (!user) {

throw new Error(`User @${username} not found`);

}

const allTweets: TweetWithEngagement[] = [];

let nextToken: string | undefined;

let pagesProcessed = 0;

// Paginate through tweets (with conservative limits)

const maxPagesForTesting = Math.min(config.maxPages, 2); // Limit to 2 pages for testing

for (let page = 0; page < maxPagesForTesting; page++) {

progress.update(page + 1);

const tweets = await this.fetchTweetPage(user.id, {

max_results: Math.min(config.tweetsPerRequest, 10), // Limit to 10 tweets per request

pagination_token: nextToken,

});

if (!tweets.data?.data?.length) {

logger.info(`No more tweets found for @${username} on page ${page + 1}`);

break;

}

// Process and filter tweets

const processedTweets = tweets.data.data

.map((tweet: TweetV2) => this.enhanceTweet(tweet, user))

.filter((tweet: TweetWithEngagement) => this.passesQualityFilter(tweet, config));

allTweets.push(...processedTweets);

pagesProcessed = page + 1;

// Check if there are more pages

nextToken = tweets.meta?.next_token;

if (!nextToken) break;

// Respect rate limits with longer delays

await this.waitForRateLimit();

}

progress.complete(`Collected ${allTweets.length} quality tweets from @${username}`);

logger.info(`Successfully fetched tweets from @${username}`, {

total_tweets: allTweets.length,

pages_fetched: pagesProcessed,

api_calls_used: pagesProcessed + 1 // +1 for user lookup

});

return allTweets;

} catch (error: any) {

progress.fail(`Failed to fetch tweets from @${username}: ${error.message}`);

logger.error(`Twitter API error for @${username}`, error);

throw error;

}

}

/**

* Get user information by username

*/

private async getUserByUsername(username: string): Promise<TwitterUser | null> {

try {

const response = await this.client.v2.userByUsername(username, {

'user.fields': [

'description',

'public_metrics',

'verified'

]

});

return response.data ? {

id: response.data.id,

username: response.data.username,

name: response.data.name,

description: response.data.description,

verified: response.data.verified || false,

followers_count: response.data.public_metrics?.followers_count || 0,

following_count: response.data.public_metrics?.following_count || 0,

} : null;

} catch (error) {

logger.error(`Failed to fetch user @${username}`, error);

return null;

}

}

/**

* Fetch a single page of tweets

*/

private async fetchTweetPage(userId: string, options: any) {

return await this.client.v2.userTimeline(userId, {

...options,

'tweet.fields': [

'created_at',

'public_metrics',

'entities',

'context_annotations'

],

exclude: ['retweets', 'replies'], // Focus on original content

});

}

/**

* Enhance tweet with additional data

*/

private enhanceTweet(tweet: TweetV2, user: TwitterUser): TweetWithEngagement {

const engagementScore = this.calculateEngagementScore(tweet);

const qualityScore = this.calculateQualityScore(tweet, user);

return {

id: tweet.id,

text: tweet.text,

author_id: tweet.author_id!,

created_at: tweet.created_at!,

public_metrics: tweet.public_metrics!,

entities: tweet.entities,

context_annotations: tweet.context_annotations,

// Enhanced fields

author_username: user.username,

author_name: user.name,

engagement_score: engagementScore,

quality_score: qualityScore,

processed_at: new Date().toISOString(),

};

}

/**

* Calculate engagement score (simple metric)

*/

private calculateEngagementScore(tweet: TweetV2): number {

const metrics = tweet.public_metrics;

if (!metrics) return 0;

// Weighted engagement score

return (

metrics.like_count +

(metrics.retweet_count * 2) + // Retweets worth more

(metrics.reply_count * 1.5) + // Replies show engagement

(metrics.quote_count * 3) // Quotes are highest value

);

}

/**

* Calculate quality score based on multiple factors

*/

private calculateQualityScore(tweet: TweetV2, user: TwitterUser): number {

let score = 0.5; // Base score

// Text quality indicators

const text = tweet.text.toLowerCase();

// Positive indicators

if (tweet.entities?.urls?.length) score += 0.1; // Has links

if (tweet.entities?.hashtags?.length && tweet.entities.hashtags.length <= 3) score += 0.1; // Reasonable hashtags

if (text.includes('?')) score += 0.05; // Questions engage

if (tweet.context_annotations?.length) score += 0.1; // Twitter detected topics

// Negative indicators

if (text.includes('follow me')) score -= 0.2; // Spam-like

if (text.includes('dm me')) score -= 0.1; // Promotional

if ((tweet.entities?.hashtags?.length || 0) > 5) score -= 0.2; // Hashtag spam

// Author credibility

if (user.verified) score += 0.1;

if (user.followers_count > 10000) score += 0.1;

if (user.followers_count > 100000) score += 0.1;

// Engagement factor

const engagementRatio = this.calculateEngagementScore(tweet) / Math.max(user.followers_count * 0.01, 1);

score += Math.min(engagementRatio, 0.2); // Cap the bonus

return Math.max(0, Math.min(1, score)); // Keep between 0 and 1

}

/**

* Check if tweet passes quality filters

*/

private passesQualityFilter(tweet: TweetWithEngagement, config: any): boolean {

// Length filter

if (tweet.text.length < config.minTweetLength) {

return false;

}

// Engagement filter

if (tweet.engagement_score < config.minEngagementScore) {

return false;

}

// Quality filter (can be adjusted)

if (tweet.quality_score < 0.3) {

return false;

}

return true;

}

/**

* Rate limiting management

*/

private async checkRateLimit(endpoint: string): Promise<void> {

const rateLimit = this.rateLimitInfo.get(endpoint);

if (!rateLimit) return; // No previous info, proceed

const now = Math.floor(Date.now() / 1000);

if (rateLimit.remaining <= 1 && now < rateLimit.reset) {

const waitTime = (rateLimit.reset - now + 1) * 1000;

logger.info(`Rate limit reached for ${endpoint}. Waiting ${waitTime}ms`);

await new Promise(resolve => setTimeout(resolve, waitTime));

}

}

private async waitForRateLimit(): Promise<void> {

// Much more conservative delay between requests to preserve API quota

const delay = envConfig.development ? 3000 : 5000; // 3-5 seconds between requests

logger.info(`Waiting ${delay}ms to respect rate limits...`);

await new Promise(resolve => setTimeout(resolve, delay));

}

/**

* Check API quota before making expensive calls

*/

private async checkApiQuota(): Promise<void> {

try {

// Get current rate limit status

const rateLimits = await this.client.v1.get('application/rate_limit_status.json', {

resources: 'users,tweets'

});

logger.info('API Quota Check:', rateLimits);

// Warn if approaching limits

const userTimelineLimit = rateLimits?.resources?.tweets?.['/2/users/:id/tweets'];

if (userTimelineLimit && userTimelineLimit.remaining < 10) {

logger.warn('⚠️ API quota running low!', {

remaining: userTimelineLimit.remaining,

limit: userTimelineLimit.limit,

resets_at: new Date(userTimelineLimit.reset * 1000).toISOString()

});

console.log('⚠️ WARNING: Twitter API quota is running low!');

console.log(` Remaining calls: ${userTimelineLimit.remaining}/${userTimelineLimit.limit}`);

console.log(` Resets at: ${new Date(userTimelineLimit.reset * 1000).toLocaleString()}`);

}

} catch (error) {

// If quota check fails, proceed but with warning